ABOUT ServiceSpace AI

ServiceSpace AI explores beyond-market innovations at the intersection of computing, community and compassion capacities. Our mission is to enhance artificial intelligence with natural wisdom, by infusing AI with timeless principles of inner transformation, the grounded know-how of spiritual practitioners, and the everyday kindness of people around the world.

We want to optimize for human connection, and ask radically different design questions. How can human labor, driven by intrinsic motivation and love, contribute to the unique curation of datasets? How can this collective effort result in meaningful applications that not only respond to today's complex challenges but also open the door for social emergence? How do we retain a throughline from our values to organizing principles to measurable impact to collective narratives and the emergence of compassion?

The vision builds on almost three decades of curating inspiring stories and cultivating deep relationships, across continents and communities, guided by three simple yet powerful principles:

- Be volunteer run: Double down on values by mobilizing people’s intrinsic motivations

- Serve without asking: Build deep networks by leading with kindness and service

- Focus on small acts: Realize that big dreams start with small steps taken together

Our services, including hosting AI-powered chatbots and wisdom-sharing platforms, are freely given to individuals and groups aligned with our spirit of generosity. From sharing insights of historical figures like Vinoba Bhave, to amplifying the voices of thought leaders like Sharon Salzberg, to building collective bots drawing from indigenous knowledge, we envision making this timeless wisdom accessible to everyone, in myriad new forms, using novel and dynamic interfaces.

When it comes to AI, any model is only as good as its data. What else is data other than millions and billions of small acts made visible? When these acts are of kindness, generosity and goodwill, curated with care, attention and intentionality, data can become a collective manifestation of service and a catalyst for regenerative natural wisdom.

Building on unique datasets from our many networks, we offer the following services and infrastructure:

- Pre-trained and fine-tuned AI models

- Centralized computing resources (GPU)

- Application platforms like chatbots and search tools

- Data hosting and retrieval-augmented generation (RAG)

- Dataset curation and annotation

Driven by our principles, and supported by a network with thousands of volunteers, we're nurturing a special blend of wisdom-driven technology. Join us in shaping a future where AI and human values converge to create a more mindful, compassionate world.

To dive deeper into our vision, check out some of these links:

- AI + Heart Intelligence: a popular address at a global summit in Sweden

- Decelerator: an AI incubator that aims to decelerate some forces while accelerating other axes of transformation

- Future of Relationships: if AI threatens to hack our intimacy, how might we respond by upgrading our sense of lived connection with each other?

- How It All Started: the story of going from a 2017 "compassion bot" demo to a post-LLM platform

Frequently Asked Questions

ServiceSpace AI is a sub-group of the ServiceSpace ecosystem that creates technological solutions to amplify a culture of humane values in the world. Learn more.

Soon after OpenAI released ChatGPT, built on its GPT-3.5 “large language model”, ServiceSpace piloted a “small language model” to offer a wisdom-driven context for the larger arc of AI innovation. Its first manifestation was a Compassion Bot, trained on hundreds of thousands of values-aligned documents curated by a large community of heartful volunteers. Try Compassion Bot.

Seeing its success, we launched a user-friendly portal for different wisdom content owners to create their own bots for serving the community. As of early 2024, we host 100+ bots covering various cross-sections of inner transformation, outer service, and collective consciousness -- heart intelligence.

When asked the same question, here is what Compassion Bot said :) … “The key differences between ServiceSpace AI and ChatGPT lie in their guiding principles and objectives. ServiceSpace AI is designed to promote empathy, compassion, and understanding in its interactions. It aims to foster positive and supportive conversations, often focusing on emotional well-being and inner transformation. ChatGPT is a general-purpose conversational model that is designed to generate human-like text based on the input it receives. It can answer questions, write essays, summarize texts, and more. However, it doesn't have a specific focus on values as ServiceSpace AI and CompassionBot do, and instead accelerates and amplifies its 'entropic' inputs.”

Across thousands of use cases, we have seen very significant differentiation between traditional GPT and bots powered by ServiceSpace AI.

For a more nuanced analysis, see this breakdown.

All ServiceSpace Bots are geared towards finding the sweet spot between the “horizontal” breadth of LLMs (which allows them to write sentences, translate into all languages, etc.) and the “vertical” depth of contextual data sources and their nuance.

A major feature of our AI is that it is wrapped in ServiceSpace values – that is, everything is offered as a gift, without any advertising or solicitation whatsoever. Not designing for monetization also strips out all gimmicks and manipulations.

For users, there are various unique features like:

- Cite Sources – the bot will link to the exact sources it used to generate each response. With videos, we are working on a feature to point the user to the specific clip segment.

- Translation – all the content is available in all the major languages of the world, regardless of the language it is uploaded in.

- Personalization – the more you use the bot, the more personal its responses are. (Users also have the option to turn off this feature.)

- Socrates :) – bots can be trained to not just deliver answers, but also ask counter-questions and suggest follow-up questions that a user might want to ask.

For the Bot Hosts, there are various unique features like:

- No Tech knowledge required! With our user-friendly interface, you can have a bot running without any AI or computational training.

- Upload any content – whether it’s a PDF, a Word doc, a YouTube video or an entire website, you just upload it or share the link and our bot will be able to handle it.

- Data commons – a bot host has the option to make its data accessible to other ServiceSpace bots, and conversely, you can also include any data corpus from that commons!

We host three kinds of bots:

- Content bot: a focused bot with your own data set. Example: Sharon Bot, with all 19 of Sharon Salzberg’s books and thousands of her articles and videos.

- Community bot: a collective of various content bots focused on a specific theme (indigenous wisdom, cancer, wisdom traditions). Example: BatGap Bot, with more than 500 different data sets.

- Yoda bot: a personalized bot customized with your choice of data sets that represent your inner Yoda. Example: Victor Bot, which includes the Gandhi data set, Fukuoka’s permaculture data set, and the Sharon Salzberg data set.

The list of benefits is unending, but here are some basic possibilities:

- Make Static Data Interactive – if a user has a specific inquiry or problem, searching through reams of your content and watching all your videos is cumbersome, if not impossible. The bot becomes your interactive spokesperson – in all languages!

- Generate Traffic – because ServiceSpace bots link to their sources, they drive more traffic to your sites, in a targeted way. Moreover, if your bot is public, you will also get new ServiceSpace traffic to your content.

- Leverage Bot’s Generative Content – use the output of your bot’s content in innovative ways. Spirituality and Practice bot, for instance, did a fundraising drive based on the content it generated. Rick would respond to his Facebook queries using the Bot’s content. Wakanyi used her bot to write her book. Phil used his Amcara bot to write up policy for Slovenia. Rev. Bonnie Rose uses it for Sunday sermons at her Church.

Sure thing. Here are a few of the early examples and queries ...

Fifty years ago, a short Indian woman (Dipa Ma) told Sharon Salzberg, "You will teach one day." She retorted, "No I won't." After some debate, her teacher said: "You understand suffering -- that's why you should teach." She has been teaching since, and SharonBot has all her content that you can probe. Nina asked something you might not ask at a meditation retreat, :) "How do you make a souffle?" It was a vintage Sharon response! Plus: "What do you do when you're confused and have to make a decision?" "How do you let go of a friend?", "Respond in Chinese: how can I grow my heart?"

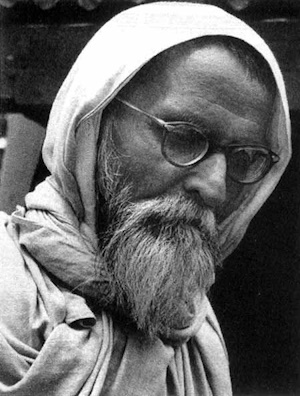

Being Gandhi's successor meant big shoes to fill, but Vinoba handled it with grace. Elders of the movement gifted us all of Vinoba's books, and we put them into a VinobaBot where people are asking all kinds of queries, like: Can you share 3 bullet points around your life lessons? Isn't becoming a 'servant' demeaning? Describe your life in a poem. What are your views on scale? What about money? And a tricky one: is there any selfless act if we feel good in giving? Oh, and what do you think about Gandhi?

For almost thirty years, Rick's "BatGap" (Buddha at the Gas Pump) Community has been host to "stories of ordinary friends and neighbors experiencing spiritual awakenings." Now, all of that wisdom is available in an interactive BatGap Bot, and after a lifetime of asking questions to spiritual people, Rick threw in a curveball: "What would the Buddha think about this bot and about the possibility that the descendants of ChatGPT will lead to the extinction of humanity?" Here was the response. :)

Aidyn was quite practical in probing ServiceSpaceGPT, "Should I rent or buy?" The answer was radically different from ChatGPT. Bonnie tried to get AI to self-reflect: Does AI have consciousness? :) So many other examples, like "What should I do if my values don't align with my job?" Or the surprising reply that Rohit received: "Can you write a poem to a first grader about being mindful while playing computer games?"

“Content bots” are ideal for authors, thought leaders and influencers who have a lot of content. Getting your bot up and running requires two things:

- Data: all your data, whether it is books, documents, videos, you name it. You can upload it all as training data for your bot.

- Credo: this is a one-pager around your bot’s core identity. A guiding set of principles, your credo defines (in plain English) the voice and context you want your bot to speak from so that it represents you. It tells the bot how to “think”, filter content and process follow-up responses.

Bot Hosts are required to only upload material that they have rights to.

If any of the uploaded content is used in formulating a Bot response, it will be cited as a source. The Bot Host can choose to have this source (a) link to the original material, (b) link to an alternate page, or (c) stay entirely private.
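
The three citation options can be pictured as a small setting attached to each source. The sketch below is purely illustrative: the `Source` class, the `SourceVisibility` names and the `citation_link` function are hypothetical and not part of the ServiceSpace platform, and it assumes that a private source simply shows no link.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class SourceVisibility(Enum):
    """Hypothetical names for the three options a Bot Host can choose from."""
    ORIGINAL = "original"    # (a) link to the original material
    ALTERNATE = "alternate"  # (b) link to an alternate page
    PRIVATE = "private"      # (c) keep the source private


@dataclass
class Source:
    title: str
    original_url: str
    visibility: SourceVisibility = SourceVisibility.ORIGINAL
    alternate_url: Optional[str] = None


def citation_link(source: Source) -> Optional[str]:
    """Return the link to show next to a response, or None for a private source."""
    if source.visibility is SourceVisibility.PRIVATE:
        return None  # assumption: private sources expose no link at all
    if source.visibility is SourceVisibility.ALTERNATE:
        if source.alternate_url is None:
            raise ValueError("an alternate link was requested but none was provided")
        return source.alternate_url
    return source.original_url


if __name__ == "__main__":
    book = Source(
        title="Example book chapter",
        original_url="https://example.org/full-text",
        visibility=SourceVisibility.ALTERNATE,
        alternate_url="https://example.org/book-page",
    )
    print(citation_link(book))
```

A publisher, for instance, might pick the alternate option so that readers land on a book page rather than on the full text.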

For copyright owners, like book publishers, the extra traffic generated by content that can itself be kept private often ends up being a worthwhile trade-off.

As a sidenote, thus far in 2024, copyright laws consider it "fair use" for AI systems to train on previously copyrighted material. In most cases, non-generative AI (like bots) can use such material without permission. This may not be the case for "generative AI", but the use of chatbots is not considered generative of new works. For more context, read about a highly copyright-protective view supporting the rights of publishers.

We’d love to hear your proposal. Simply fill out this form, and we’ll get back to you.

We evaluate proposals based on three core criteria:

- Intention – we like supporting projects that are attempting to create value outside of the market (for monetizing ideas, there are ample other platforms).

- Experiment – we like innovations that don’t just automate the status-quo, but more radically push the envelope in the direction of connection and community.

- Data depth – we like working with larger data corpora, which allow for more differentiation.

If you have an innovative idea for the use of AI, within the ServiceSpace framework, we’d love to hear about it!

Most Bot Hosts send an email to their communities with details around:

- sign-up process; see Peter Russell’s example or David Buckland’s page.

- sample questions they might ask; this helps spark curiosity.

- sample responses of what the bot created; see Jem Bendell’s page with curious questions and Bot’s responses.

- community conversations like podcast episodes (see Jeffrey’s video about it)

For extra credit, :) you can create your own Bot guide, like this BatGap Bot Guide.

All ServiceSpace offerings are offered as a gift, by volunteers. They will always stay that way – it has been part of the ServiceSpace guiding principles for the last 25 years.

Each bot, however, requires an “OpenAI Key”; OpenAI charges a nominal fee for usage, on the order of a penny for every couple of queries. This is paid directly to OpenAI. A good metaphor – ServiceSpace is gifting you the house, but you are covering your own electricity directly with the utility provider.
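
As a rough illustration of that arithmetic, the sketch below estimates a monthly bill from the ballpark of one penny per couple of queries. The function name and the default rate are assumptions made for illustration only; actual pricing is set by OpenAI and varies with the model used and the length of each exchange.

```python
def estimate_monthly_cost_usd(queries_per_month: int, queries_per_penny: float = 2.0) -> float:
    """Rough usage cost in US dollars.

    Assumption: about one penny for every couple of queries (a ballpark,
    not a published price).
    """
    if queries_per_month < 0:
        raise ValueError("queries_per_month must be non-negative")
    if queries_per_penny <= 0:
        raise ValueError("queries_per_penny must be positive")
    pennies = queries_per_month / queries_per_penny
    return pennies / 100  # convert pennies to dollars


if __name__ == "__main__":
    for queries in (100, 1_000, 10_000):
        cost = estimate_monthly_cost_usd(queries)
        print(f"{queries:>6} queries/month: about ${cost:.2f}")
```

Under this assumption, a community asking a thousand questions a month would pay OpenAI roughly five dollars.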

All AI solutions are some combination of four approaches: prompt engineering, RAG (retrieval augmented generation), fine-tuning, and pre-training.

ServiceSpace AI uses a combination of pre-trained Large Language Models (LLMs) and Retrieval Augmented Generation (RAG) to find a sweet spot between broadly-based Generative AI and contextually-sensitive Retrieval AI, via semantic search.

While LLMs are helpful for tasks like completing sentences and translating languages, RAG optimizes that output so it is aligned with the nuances of an authoritative knowledge base. For example, GPT-4’s response to “what is more ethical – to rent or buy” will be quite vague, whereas Compassion Bot’s response offers a lot more nuance.
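
To make the retrieval step concrete, here is a minimal, self-contained sketch of the RAG pattern: find the passages most relevant to a question, then place them, with their sources, into the prompt sent to the language model. It is a toy under stated assumptions and not the ServiceSpace implementation: the documents are invented, every function name is hypothetical, and plain word overlap stands in for the embedding-based semantic search a real system would use. The final call to an LLM is left out.

```python
import math
import re
from collections import Counter

# Invented mini knowledge base; a real bot would index a host's uploaded content.
DOCUMENTS = [
    {"source": "essay-on-generosity",
     "text": "Generosity grows when we give small gifts without expecting anything in return."},
    {"source": "talk-on-home",
     "text": "Whether you rent or buy a home, ask how the choice serves your community and your peace of mind."},
    {"source": "notes-on-meditation",
     "text": "Meditation trains attention by returning gently to the breath."},
]


def tokenize(text):
    """Lowercase the text and split it into alphabetic words."""
    return re.findall(r"[a-z]+", text.lower())


def cosine_similarity(a, b):
    """Cosine similarity between two word-count vectors (Counters)."""
    dot = sum(a[word] * b[word] for word in a.keys() & b.keys())
    norm_a = math.sqrt(sum(count * count for count in a.values()))
    norm_b = math.sqrt(sum(count * count for count in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(question, documents, top_k=2):
    """Return up to top_k documents that share words with the question, best first."""
    query = Counter(tokenize(question))
    scored = [(cosine_similarity(query, Counter(tokenize(doc["text"]))), doc)
              for doc in documents]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [doc for score, doc in scored[:top_k] if score > 0]


def build_prompt(question, passages):
    """Assemble a prompt that asks the model to answer from numbered, cited passages."""
    context = "\n".join(
        f"[{i}] ({p['source']}) {p['text']}" for i, p in enumerate(passages, start=1)
    )
    return (
        "Answer using only the passages below and cite them by number.\n\n"
        f"{context}\n\nQuestion: {question}"
    )


if __name__ == "__main__":
    question = "Is it more ethical to rent or buy?"
    print(build_prompt(question, retrieve(question, DOCUMENTS)))
```

Because each passage carries its source label into the prompt, the model's answer can point back to the material it drew from, which is what makes source citation possible.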

For specific use-cases, we are also experimenting with fine-tuning models, and as pre-trained models become smaller, more efficient and less expensive, we also intend to explore training an LLM from scratch with human-centered values.

As we create Content Bots, we aim to spawn a back-end data commons -- "a polyculture field of wisdom data sets" -- that could not only be integrated with LLMs to make them wiser, but could also enable many other potential applications.

Such accessible “vertical” data sets can then support companion “Yoda bots” by simply selecting different data stores that appeal to you -- say Gandhi, Permaculture, SharonSalzberg, Bahai, Indigenous -- and defining your own credo -- "add a joke whenever you can; embrace paradox; include music lyrics from Beatles whenever possible." It'd be like having a ready connection to your aspirational self. Not just for your misaligned moments but even for greater creativity.
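
One way to picture such a Yoda bot is as a small configuration: a list of data stores chosen from the commons plus a credo, combined into the instructions the bot runs with. The sketch below is hypothetical throughout; the `YodaBot` class, the `DATA_COMMONS` set and the wording of the generated instructions are illustrative assumptions, not the platform's actual design.

```python
from dataclasses import dataclass
from typing import List

# Illustrative data store names, taken from the examples in the text above.
DATA_COMMONS = {"Gandhi", "Permaculture", "SharonSalzberg", "Bahai", "Indigenous"}


@dataclass
class YodaBot:
    name: str
    data_stores: List[str]
    credo: List[str]

    def __post_init__(self) -> None:
        # Only data stores that exist in the commons can be selected.
        unknown = [store for store in self.data_stores if store not in DATA_COMMONS]
        if unknown:
            raise ValueError(f"Not in the data commons: {unknown}")

    def system_prompt(self) -> str:
        """Combine the chosen data stores and the credo into one instruction text."""
        stores = ", ".join(self.data_stores)
        principles = "\n".join(f"- {line}" for line in self.credo)
        return (
            f"You are {self.name}. Draw only on these data stores: {stores}.\n"
            f"Follow this credo:\n{principles}"
        )


if __name__ == "__main__":
    bot = YodaBot(
        name="My Yoda",
        data_stores=["Gandhi", "Permaculture", "SharonSalzberg"],
        credo=["add a joke whenever you can", "embrace paradox"],
    )
    print(bot.system_prompt())
```

Changing the voice of the bot then means editing a few plain-English lines, not retraining a model.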

In the context of those Yoda bots and their usage, we hope to collectively cultivate “compassion intelligences” that can be used in more vertical agentic applications.

To support this vision, we are building a “decelerator” that invites unsuspecting wisdom keepers to the AI innovation table and accelerates the wisdom axis for the coming times.

Lastly, and perhaps most importantly, we aim to engage micro-contributions from a global volunteer community, as a proactive response to the fear narratives surrounding AI, and to give rise to a collective field of emergence that could birth a “black swan” of compassion.

ServiceSpace is a volunteer-run organization that started 25 years ago, in the very heart of Silicon Valley. Its wide-ranging projects span an online good-news portal, a peer-learning “Pod” platform, the offline Karma Kitchen restaurant, Awakin Circles in living rooms, and Moved by Love retreats. It reaches millions of users every month, but operates strictly without any monetization, commercialization or solicitations. Its unique operating principles have attracted many awards and accolades, from the Dalai Lama to President Obama.

Just fill out this form, and our team will evaluate your proposal and get back to you.

If you have any questions or want to explore ways to volunteer, please drop us a note anytime!

Thank you for co-creating!

We don't know the outcomes of our experiments, but it seems critical that we try. Back in 1999, when we started ServiceSpace, we didn't feel like the Internet would magically repair the world's ills. But we leveraged it to "make it cool to give" and change ourselves along the way. Similarly, we are now attempting to tap AI to encourage community engagement, whose subtle corpus of heartful consciousness might elevate our collective threshold of wisdom -- and perhaps alleviate some suffering.

Shantideva's poetry in the 8th century still feels so apt:

“For as long as space endures, and for as long as living beings remain, until then, may I too abide to dispel the misery of the world.”